For the machine to speak with your own voice

Inma Hernaez Rioja is a professor at the Bilbao School of Engineering and founder of the research group Aholkularitza. The group was founded in 1995 at the UPV/EHU and works on speech technology in Basque. Until now it has worked fairly quietly. But the response to its latest project, MyTTS, has been so great that the team had to suspend the system temporarily.

Behind this success lie many years of work and, at the starting point, a personal experience: Hernaez's sister lost her voice after an operation, and helping her communicate, with Hernaez herself and with others, has driven Hernaez's career. "My sister has been the first user of our developments. On one occasion they paid tribute to a close friend of hers, and she was able to give a short speech thanks to the app. Being able to give her that opportunity has given me a lot of strength to push the research forward."

From its beginnings to the present, progress has been remarkable. Twenty years ago, achieving good quality with voice synthesis techniques required many hours of recordings of a single person. To build a voice like Siri's, for example, Hernaez says about 30 hours would be needed. In addition, the recording had to be made in a studio, at very high quality.

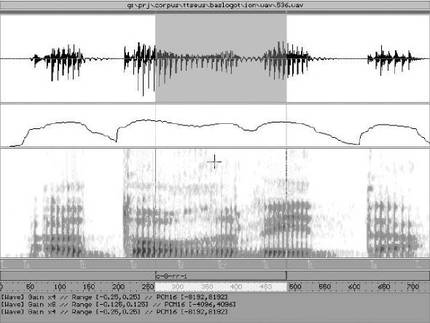

The technique was based on a "cut and paste" procedure. What was cut was not words or phrases but small units: diphones, triphones, vowels or consonants in their context... Then, depending on the context, the algorithm decided which unit to take from the database. "It was called corpus-based synthesis. It was very costly," she says.
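The article describes the selection step only in broad terms. A common way to carry it out, offered here as an illustrative assumption rather than the group's documented method, is a Viterbi search that balances a *target cost* (how well a recorded unit fits the context the text asks for) against a *join cost* (how audible the cut between two consecutive units is). The `Unit` and `Target` fields, the cost weights and the toy database below are all hypothetical.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

# Hypothetical toy model of corpus-based (unit selection) synthesis.
# Features, weights and data are illustrative only.


@dataclass(frozen=True)
class Unit:
    label: str       # unit type, e.g. the diphone "k-a"
    pitch: float     # mean F0 of the recorded unit, in Hz
    duration: float  # duration in ms
    start_f0: float  # F0 at the unit's left edge (used when joining)
    end_f0: float    # F0 at the unit's right edge (used when joining)


@dataclass(frozen=True)
class Target:
    label: str       # unit type required by the text
    pitch: float     # F0 the context calls for, in Hz
    duration: float  # duration the context calls for, in ms


def target_cost(unit: Unit, target: Target) -> float:
    """How badly a candidate fits the requested context (arbitrary weights)."""
    return (abs(unit.pitch - target.pitch) / 10
            + abs(unit.duration - target.duration) / 20)


def join_cost(left: Unit, right: Unit) -> float:
    """F0 jump at the concatenation point (arbitrary weight)."""
    return abs(left.end_f0 - right.start_f0) / 5


def select_units(targets: List[Target], database: List[Unit]) -> Tuple[List[Unit], float]:
    """Viterbi search for the candidate sequence with the lowest total cost."""
    if not targets:
        return [], 0.0
    candidates = [[u for u in database if u.label == t.label] for t in targets]
    for t, cands in zip(targets, candidates):
        if not cands:
            raise ValueError(f"no recorded unit for {t.label!r}")

    # best[i][j] = (cheapest cost of a path ending in candidate j at position i, backpointer)
    best: List[List[Tuple[float, Optional[int]]]] = [
        [(target_cost(u, targets[0]), None) for u in candidates[0]]
    ]
    for i in range(1, len(targets)):
        row: List[Tuple[float, Optional[int]]] = []
        for u in candidates[i]:
            tc = target_cost(u, targets[i])
            cost, back = min(
                (best[i - 1][k][0] + join_cost(prev, u) + tc, k)
                for k, prev in enumerate(candidates[i - 1])
            )
            row.append((cost, back))
        best.append(row)

    # Backtrack from the cheapest candidate at the last position.
    j: Optional[int] = min(range(len(best[-1])), key=lambda k: best[-1][k][0])
    total = best[-1][j][0]
    path = []
    for i in range(len(targets) - 1, -1, -1):
        path.append(candidates[i][j])
        j = best[i][j][1]
    return path[::-1], total


# Hypothetical database: two recordings of "sil-k", two of "k-a", one of "a-sil".
DATABASE = [
    Unit("sil-k", 120, 80, 118, 122),
    Unit("sil-k", 140, 80, 138, 142),
    Unit("k-a", 125, 100, 121, 128),
    Unit("k-a", 150, 100, 145, 155),
    Unit("a-sil", 125, 90, 127, 120),
]
TARGETS = [Target("sil-k", 130, 80), Target("k-a", 130, 100), Target("a-sil", 125, 90)]

path, total = select_units(TARGETS, DATABASE)
print([u.pitch for u in path], round(total, 2))
```

In this toy run both "sil-k" recordings are equally far from the 130 Hz target, so the join cost breaks the tie: the search keeps the 120 Hz "sil-k", which connects smoothly to the 125 Hz "k-a". Scoring every combination this way over a large recorded corpus is part of why the approach needed so many hours of studio audio.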

Utility and quality

The next step came around 2002 with statistical parametric synthesis. This technique first parameterizes the voice signals and then relates these parameters to one another through statistical processing. She explains that the quality is not as good as with the previous approach, but an acceptable result can be obtained from far fewer recordings. Moreover, its statistical nature makes it very flexible: the statistical model can be adapted to any sample.

She explains the process: "We have an average voice. That voice doesn't belong to anyone; it is built from many voices and speakers. Not just any speakers: they are usually professional speakers, and the average voice is obtained from their recordings. This voice can then be adapted to the voice of any user, and a sample of a hundred sentences is enough for that."

These hundred sentences cover all the sound combinations, and recording them takes about half an hour. The advance is clear: it makes it much easier for anyone to have a synthetic voice adapted to their own. "Of course, if we record more sentences we get better quality, but we can't ask users for more effort than that," says Hernaez.
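The article does not say exactly how the average voice is adapted. One textbook technique, shown here purely as an illustration and not as the group's algorithm, is maximum a posteriori (MAP) adaptation of the model's Gaussian means: each mean is pulled from its average-voice value toward the user's data, and the more user data there is, the further it moves. That gives an intuition for why more sentences bring better quality. The function name, the relevance factor `tau` and the numbers below are hypothetical, and the sketch assumes every frame belongs to a single Gaussian.

```python
from typing import List, Sequence

# Illustrative MAP adaptation of one Gaussian mean vector.
# Simplification (assumption): every adaptation frame is assigned to this one
# Gaussian; real systems weight frames by how likely each model state is.


def map_adapt_mean(
    prior_mean: Sequence[float],
    frames: Sequence[Sequence[float]],
    tau: float = 10.0,
) -> List[float]:
    """Pull the average-voice mean toward the user's data.

    tau (the relevance factor) is how many frames the prior is "worth":
    with few frames the result stays near prior_mean, with many it
    approaches the mean of the user's own frames.
    """
    n = len(frames)
    return [
        (tau * prior_mean[d] + sum(frame[d] for frame in frames)) / (tau + n)
        for d in range(len(prior_mean))
    ]


average_voice = [150.0, 1.0]  # hypothetical: [mean F0 in Hz, one spectral parameter]
user_frame = [200.0, 3.0]     # hypothetical value measured in the user's recordings

for n in (10, 90):
    print(n, map_adapt_mean(average_voice, [user_frame] * n))
```

With `tau = 10`, ten user frames move the mean F0 only halfway, from 150 Hz to 175 Hz, while ninety frames bring it to 195 Hz, close to the user's 200 Hz. With no user data at all, the average voice is returned unchanged.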

The system also has limitations. For example, it cannot express emotions: "A corpus with emotions can be recorded, but in this project the sentences are neutral and we do not analyze the text to look for emotions. That could be marked in the text, but we don't do it."

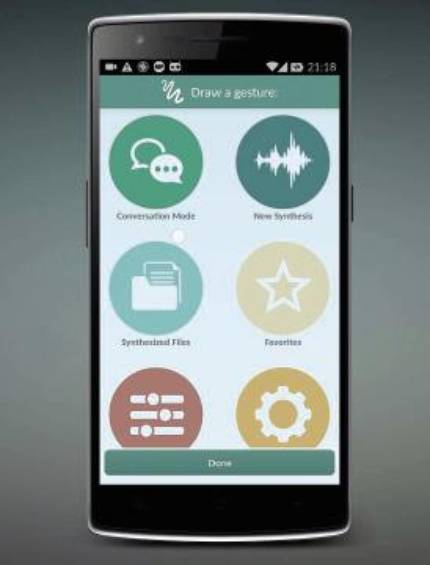

But the advantages are obvious: the synthesizer takes up little memory, they have easily implemented it on Android, and it runs in real time on mobile phones... All this allows anyone to use it in their daily life. And that was the initial goal.

Hernaez acknowledges that there are currently systems that offer higher quality, especially those based on neural networks: "Current synthesis technology is very good. I could play you some phrases and you would not be able to tell whether they are synthetic or natural. But we couldn't run it on Android or use it in real time."

From laboratory to user

And that is the crux: in daily life, people who need a synthetic voice to communicate have to use whatever systems exist, and these are very limited. So in many cases women have to use a man's voice; speakers of minority languages, a voice in the hegemonic language; and children, Siri's voice. The group's goal has been to narrow the gap between the user's own voice and the one the synthesizer offers.

The group has made the system as convenient and simple as possible for the user. It works as follows: "The user first records those 100 sentences, in Basque or Spanish, and an application with their synthetic voice is created automatically. They then receive it by email and just have to click to download it to their Android phone. The voice is installed as a system voice, so it can be used not only in our communication app but also in other applications, for example to read books in many e-readers or in Adobe. And they can communicate in real time."

People with very limited mobility, for their part, use a tablet with a switch or an eye-tracking device. In this case the voice is integrated into Windows.

Among the users are people with amyotrophic lateral sclerosis (ALS). In principle, the project aims to help anyone who has lost their voice, but when the loss is sudden (for example, after a stroke), it is difficult to obtain that person's synthetic voice. People with ALS, by contrast, lose their ability to move progressively. So from the moment they are diagnosed, they have time to make the recording and create their own synthetic voice.

Over the last three or four years, together with Biocruces, they have worked with patients who were due to undergo a laryngectomy. "Laryngectomized patients can speak with an esophageal voice, but through this collaboration the doctors told the patients to make the recording before the operation. That way they already had the application, because in the first few weeks they can't speak," explains Hernaez. She says this collaboration has given the project a great boost.

They are now working to improve quality (they have a new algorithm) and to shorten the path from the laboratory to the user. They are also working to offer children's voices and to make the system available in other languages as well, such as Catalan. The ultimate goal: that everyone who needs it can communicate through a synthetic voice in the most personalized, natural and simple way possible.
